The backwards AI implementation pattern (and why it fails)

Most AI implementations don't fail at the engineering layer. They fail at the layer nobody assessed before the engineering started.

The internal AI tool failed at week twelve. Not catastrophically – no outages, no press releases about the wrong kind of milestone. It just stopped being used. Adoption flatlined. Employees returned to their usual workarounds. The vendor got a stern call.

The company in question is a medium-sized enterprise in the knowledge industry. The tool that wowed them in the demo was supposed to revolutionise how the company accessed, shared, and collaborated on internal knowledge.

Instead, it scaled chaos. Incoherence. Inaccurate answers. More noise than signal.

The root cause? The knowledge base the tool was feeding from was itself chaotic. Contradictory terminology. Duplicated resources. Conflicting definitions and messaging across departments.

The AI faithfully synthesised everything it found – which is to say, it faithfully synthesised the confusion it was given to work from.

AI doesn’t create organisational knowledge. It reveals how well it already exists.

The recommended fixes from the vendor: better data quality upstream. Consider creating a knowledge graph.

Both are the right tools for a different class of problem to this one, though.

Data engineering is the right tool for AI applications that process quantitative data. Predictive analytics, financial forecasting, fraud detection, and so on.

It solves for quantitative systems: forecasting, fraud detection, optimisation.

But knowledge AI operates on language.

That’s a different class of problem.

Similarly, knowledge graphs only add value once the foundational fundamentals of canonical substance, clean taxonomy, and content modelling have been applied to the knowledge base.

If either condition is present, the constraint isn’t engineering.

It’s editorial.

Most knowledge-industry companies begin by framing AI investment decisions as an engineering conversation, with the first-order action being: build a system or procure one.

Then, when performance doesn’t meet expectation, they work backwards through failure layers until they reach the root cause: the organisation’s knowledge material is the bottleneck, not the AI itself.

The transformation they’re chasing is more epistemological than technical.

This is an article about the sequence that prevents that.

The sequence less-followed

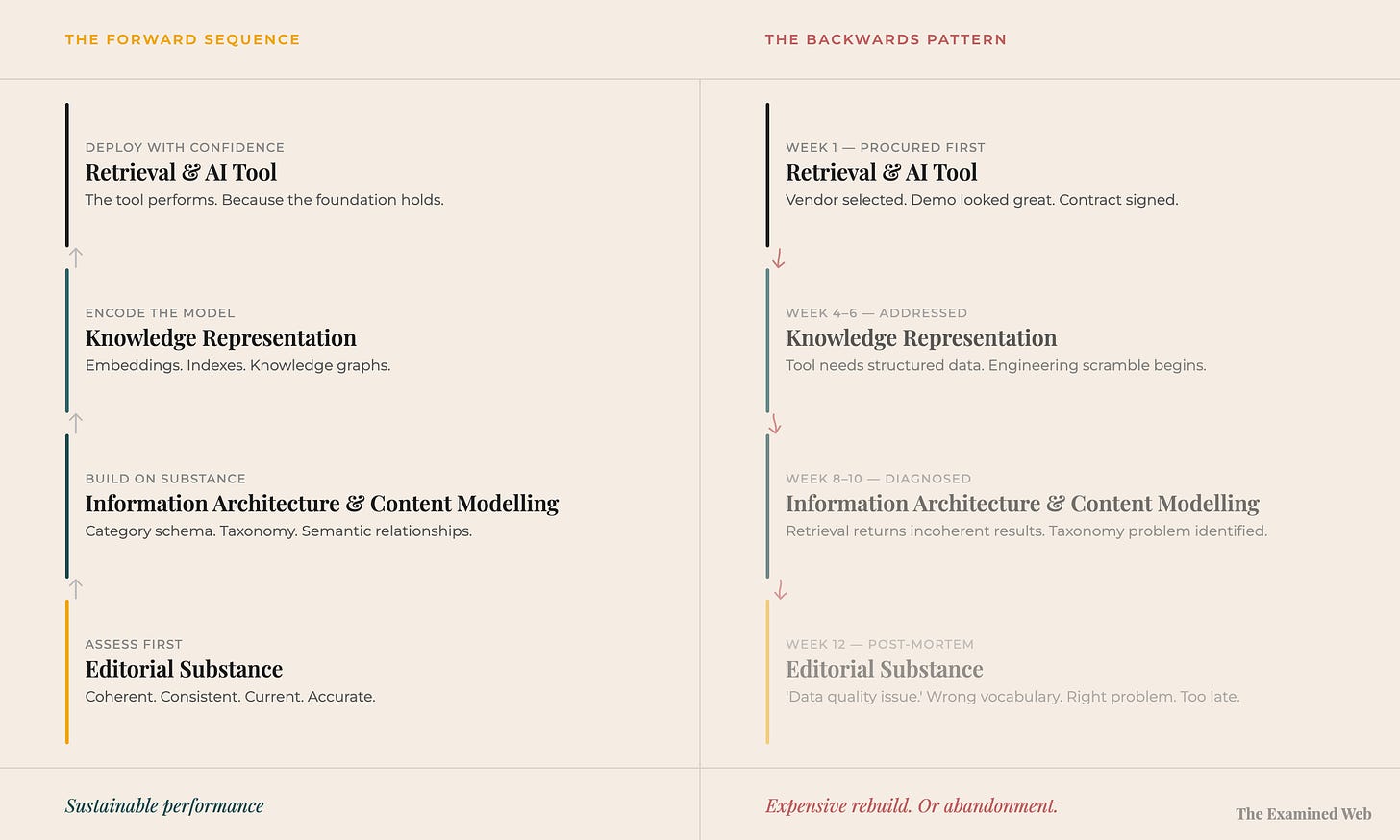

There is a sequence to knowledge AI implementation that works. It runs in this order:

1. Editorial substance

Content is canonical, coherent, accurate, current, and consistent enough, not only for human users to easily understand and use it, but for AI users too. This is the foundational layer. It’s the least exciting investment, but nothing can actually be built above this foundation if it’s broken.

You cannot structure incoherence. You cannot retrieve meaning from noise.

2. Information architecture & content modelling

Once the substance is sound, this layer structurally organises it: mapping the territory so retrieval systems can navigate it. Information architecture sets the organising logic – category schema, taxonomy, navigational labelling, metadata. A content model defines what different types of content are, how they relate to each other, and what they mean within the broader business domain. Together, they produce the semantic architecture that knowledge representation can encode.

3. Knowledge representation

The encoding of that modelled content in formats that retrieval and AI systems can process: embeddings, indexes, knowledge graphs, context packages. This layer is where the engineering work begins – but it is entirely dependent on having something worth encoding.

4. Retrieval and context

The AI tool. The interface. The thing that gets demo’d in procurement conversations, evaluated by vendors, celebrated in launch announcements… and blamed in post-mortems.

The sequence matters because each layer is a prerequisite for the one above it.

Retrieval cannot outperform the knowledge representation feeding it. Knowledge representation cannot impose structure on content that has none. Content modelling cannot organise material that is incoherent at the substance level.

Remove the foundations and the ceiling drops. Categorically.

The tool doesn’t perform slightly worse. It fails in a way that no subsequent engineering investment can fix, because the constraint sits below the engineering layer entirely.

What’s happening instead

Almost nobody is currently following the sequence that would enable successful knowledge AI implementation.

In practice, the process runs in the opposite direction. An organisation identifies an AI technical capability (not necessarily analogous with an actual business opportunity) – usually through a vendor demonstration, a competitor announcement, or a board directive. Then, they procure a tool.

Layer 4 arrives first.

Layer 3 is addressed as implementation begins, when the tool’s appetite for structured data becomes apparent.

Layer 2 becomes visible when the retrieval results are incoherent and someone diagnoses a taxonomy problem.

Layer 1 – the substance layer – surfaces last, in the post-mortem. And even then, often misdiagnosed as a “data quality issue” in the absence of any content expertise sitting at the table.

By that point, the pattern is set.

The organisation has already invested in tooling and knowledge representation – building technical infrastructure on top of knowledge base content that was never assessed.

The fix requires going back to the beginning: what does this content actually say, and is it fit for purpose for the tool’s use case?

But that’s not what happens.

What happens is a doubling down on what the organisation already knows how to do: more engineering.

More structure. More systems. More attempts to impose order downstream.

The result: a second round of spend, a longer timeline, and the persistent belief that the problem must still be technical.

It isn’t.

The problem began before the engineering started.

Why the sequence produces a predictable outcome

The backwards pattern produces a specific failure mode that is observable, consistent, and well-documented – even if rarely diagnosed correctly.

When retrieval is built on fragmented content, the AI synthesises the fragmentation.

If Marketing uses five product names and Product uses two, and none of them overlap exactly, the tool will return contradictory answers because the source material is contradictory.

That’s not “hallucination”. The model is working correctly and within a perfectly manageable context window.

The problem is editorial source quality – which the technical post-mortem will call a data quality problem, because that’s the vocabulary available.

When knowledge representation is built before content modelling, the semantic architecture reflects whatever structure the content had when it was indexed – which is usually the structure of accumulated accident, not intentional design.

Adding embeddings on top of an unorganised, bloated knowledge base indexes the disorganisation and bloat.

And when content modelling is attempted without editorial substance underneath it, the taxonomy becomes an exercise in mapping multiple content items that shouldn’t be there in the first place.

You can assign ‘clean’ metadata to content that contradicts itself. You can seamlessly link relationships between thousands of content assets via a vector database and have it beautifully represented on a knowledge graph – even where half of the corpus added is irrelevant, contradictory, off-message, strategically at cross-purposes, outdated or just plain incorrect.

The structure works. The retrieval quality is excellent.

It’s the actual source content that’s the problem.

Why the backwards pattern keeps happening

The backwards sequence is a structural outcome of who leads AI implementation decisions and what vocabulary they use.

Many of the organisations advising enterprises on AI readiness – major consultancies, system integrators, and a lot of AI vendors – literally built their frameworks for quantitative applications and according to best practices defined through the lens of technical architecture.

Predictive analytics. Fraud detection. Recommendation engines. Process automation.

These are applications where “data quality” is precisely the right diagnosis. Where clean pipelines and normalised schemas directly determine performance.

Language-based AI – enterprise search, knowledge retrieval, document synthesis, question answering – is a different category.

The input isn’t numerical records. It’s the content of the corporate brain: knowledge bases, the website, reams of documentation, policies, external and internal communications assets, procedures, policies.

Whether that content is clear, consistent, and task-oriented is not a data engineering question. It’s an editorially-defined user experience question.

Consulting frameworks developed for the first category were applied to the second without adaptation.

And both the vocabulary and the frameworks they describe – data readiness, data governance, data quality – have been carried across, unchanged.

Because the vocabulary frames the diagnosis, the diagnosis stays in territory that data engineers can comfortably address – even when the actual problem sits way beyond what they can comfortably solve.

The actual builders of frontier AI models don’t actually have this problem. Quite the opposite.

Anthropic, OpenAI, Google, and the companies that train AI through human feedback – they value the role of content and knowledge management expertise as being at the heart of everything they’ve built. They hire content designers, content strategists, content reviewers, editors, linguists, academics, and subject domain experts.

Training AI to produce coherent outputs requires linguistic judgement and taste. The enterprises implementing those models face the same requirement.

But the consultants advising them come from a discipline that doesn’t have those capabilities – and so frames the problem in terms of the capabilities it does have.

This is how a rational system produces a consistent error. Every participant follows their own incentives correctly. The outcome is still illogical.

What this means for the next investment

Most organisations reading this have already been through at least one backwards implementation. Or are in the middle of one.

The instinct, coming out the other side, is to find a better tool. A more capable model. A vendor with a more robust retrieval architecture. These will not fix a substance problem. They will perform the same failure more expensively.

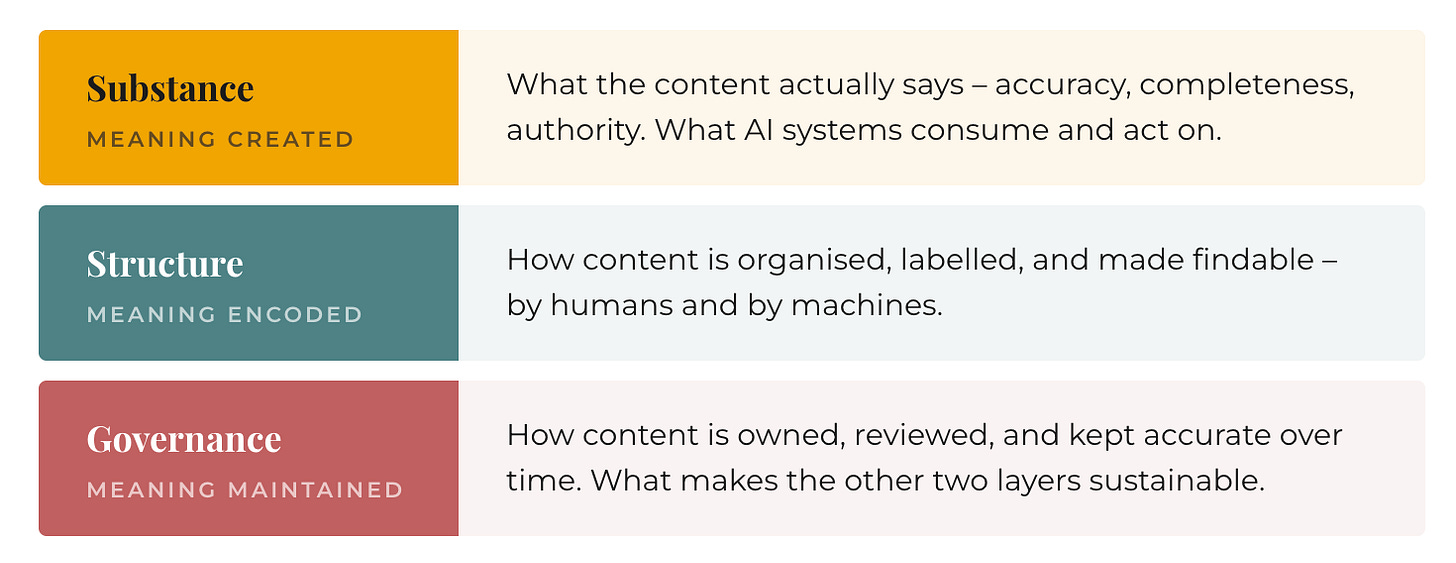

Before the next procurement decision, a content infrastructure assessment changes what gets measured. It works backwards from the question the AI will be asked – not the question of whether the technology is capable, but whether the content it will synthesise is capable.

Three things it surfaces:

Substance. Is the content coherent, consistent, and accurate enough for AI to synthesise without producing wrong answers? Are there conflicts between what Marketing says and what Product says? Are policies updated across all the documents that depend on them? Is the knowledge base 30% signal and 70% accumulated noise?

Structure. Is the content organised in a way that allows retrieval to work? Not “is there a taxonomy” – there usually is. Is the taxonomy doing the job: connecting related content, distinguishing overlapping concepts, making relationships machine-navigable?

Governance. Are there processes that will prevent the content from degrading the day after implementation? A knowledge base that passes a readiness assessment today will fail within six months without operational ownership. AI doesn’t improve content governance. It exposes its absence.

These questions should be asked before any retrieval architecture is specified, before any vendor is evaluated, before any contract is signed.

They’re not more important than the technical questions. This is not another ‘content-first’ rallying cry. Their answers determine whether the technical investment will land.

You cannot engineer your way out of a lack of editorial substance, coherence, and quality.

The engineering layer assumes quality exists. Content strategy creates it. These are not sequential options. They are a dependency chain.

The forward sequence

The backwards pattern is expensive to correct once you’re inside it. The forward sequence is not particularly complicated before you start.

Assess the content infrastructure before specifying the technical architecture. Understand what the AI will actually ingest before deciding how to encode it. Identify the editorial gaps before commissioning the retrieval system.

This sequence requires bringing content expertise into conversations that currently exclude it – not as a ‘nice-to-have supplement’ to the engineering conversation, but as the prerequisite upstream input that makes downstream engineering decisions meaningful.

It also changes the timeline. Organisations that follow the forward sequence spend longer in assessment before procurement. They invest earlier in work that has no interface, no demo, no vendor celebrating the contract.

It looks slower. It isn’t. It's simply the only way to build a system that actually works..

The implementations that deliver measurable return after twelve months are not the ones with the most sophisticated retrieval architecture.

They’re the ones that knew what their content could support before they built anything on top of it.

Most organisations try to fix AI at the system layer.

The constraint doesn’t live there.

It sits below it – in what the organisation actually knows, and how well it knows it.