Beyond chatbots: Why practical application of AI in business still feels so elusive to knowledge workers

AI isn't too advanced for non-technical leaders to sponsor, evaluate, or govern. The vocabulary explaining it has just skipped the layer where the work actually lives.

Most non-technical knowledge workers have a clear mental model of what ‘the thing’ of AI is: the chatbot.

You type a question, it gives you an answer. The quality varies. Sometimes it sounds clever. Sometimes it does a little LLM-vibed improv jazz solo when you least expect or need it. But the shape of the interaction is recognisable.

Using AI = chatting back and forth with a model.

If that’s AI basecamp, then advancement from here is where a lot of non-tech leaders start to feel the altitude hit.

‘Basic explainers for beginners’ often include concepts such as: neural networks, retrieval augmented generation, context windows, agentic orchestration, and ETL pipelines. Not exactly breezy.

The unspoken instinct in the room is usually some version of: sounds impressive... but I have no idea what we are actually talking about.

Everything and absolutely nothing. All at once.

At this point, AI stops feeling like a tool sitting on the desk and starts feeling more like the weather – everywhere, consequential, somehow shaping the day, but very difficult to point at.

Business leaders are not lacking literacy here. They are being given the wrong explanation entirely, one that gives them nothing tangible to point at.

What decision-makers need is to understand where AI is relevant to specific business goals.

Too often, they instead get abstract platitudes or plumbing specifications: promises about productivity on one side, technical taxonomies on the other.

The promise gets inflated before the business context has been made clear.

The end result: we’ve built a highly complex, frontier technical solution... now we just need to go and find a business/user problem to fit it.

The missing layer is business-context clarity: what kind of work AI systems can genuinely enhance or even transform, what internal knowledge those systems depend on for those value opportunities to be realised, and what would make them reliable enough to plan, judge, and govern.

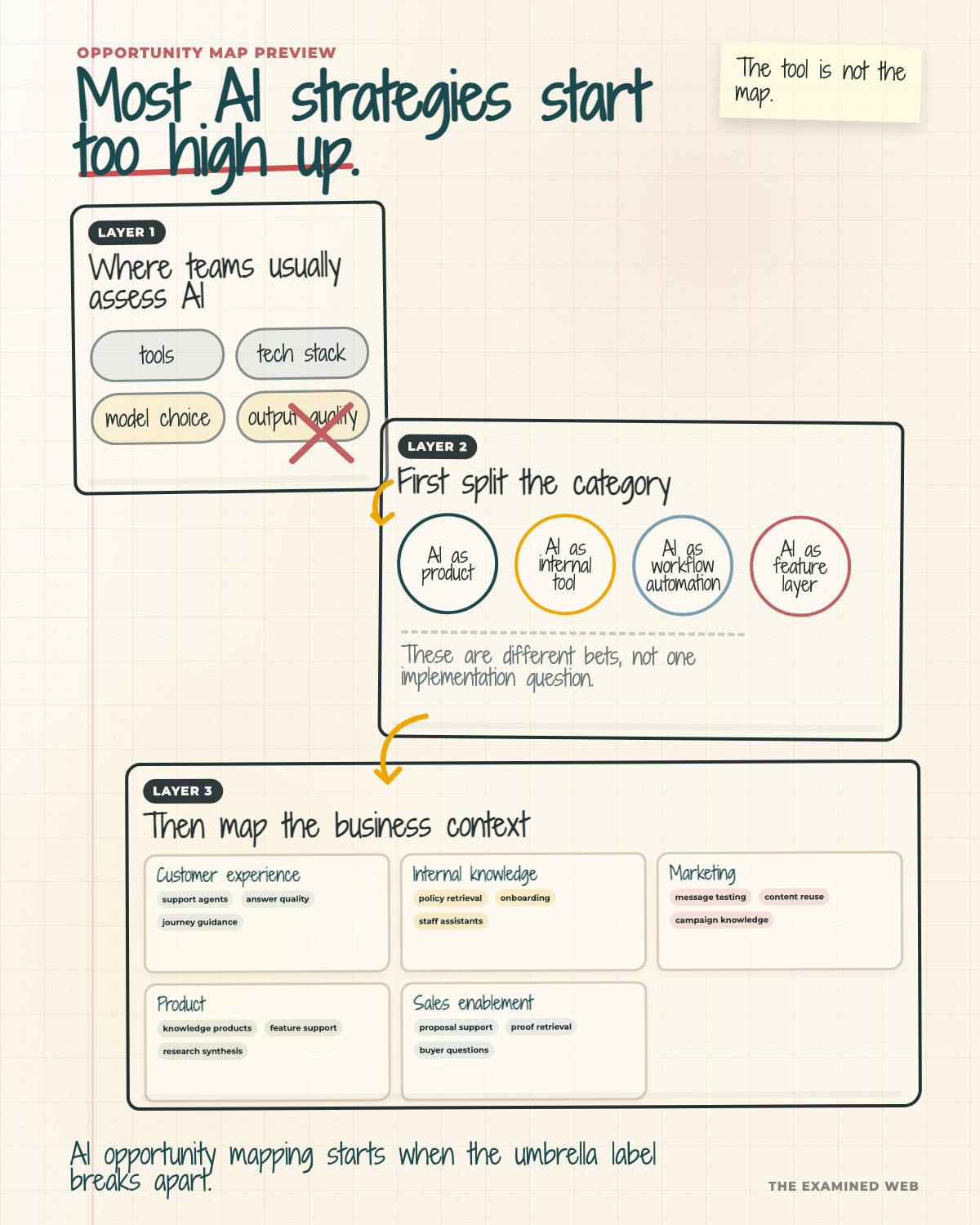

That requires a different kind of taxonomy: one that conceptualises AI not through the lens of its technical foundations, but through the lens of the practical business problems it helps to solve.

AI is not one thing

The phrase ‘AI’ is now carrying far more weight than any single phrase can responsibly carry.

Inside the same organisation it can mean:

AI as an external-facing product – where the LLM capability is what the customer is buying, accessing, or being served by. The AI is the product, not an addition to it.

AI as a product feature layer – where AI sits inside an existing product as an additive capability that enhances how the product works, but isn’t the reason the product exists.

AI as an internal tool – where LLM capabilities are pointed at the work employees already do – retrieval, drafting, synthesis, analysis, internal Q&A. The user is the organisation, not the customer.

AI as workflow automation – where the system moves work through a process with reduced human checking. This is where ‘agentic AI’ lives in operational terms, stripped of the theatre – and where the operational stakes of weak content infrastructure compound at machine speed.

These are not minor variations on a unified theme. They are wildly different bets, with different time horizons, different commercial logic, different operational risks, and very different demands on what the organisation already has underneath them.

But when everything sits under the same label, knowledge workers cannot tell which conversation they are actually participating in.

The opportunities to apply AI across marketing, product, customer experience, sales, and internal operations are so varied that any continued discussion of ‘AI transformation’ as a single programme of work at boardroom level is, in practice, absurd.

But this is exactly what keeps happening in a lot of meeting rooms right now. Two department heads sit in the same meeting about the organisation’s AI strategy, nodding along at the same sentence, seemingly in total agreement – but silently picturing different things.

One is imagining a customer-facing release in the next quarter. The other is imagining a year-long internal rollout of agent-powered workflow automation.

Nobody explicitly disagrees on anything, because nobody has been forced to be specific about what they are actually picturing. The ‘unified strategy’ survives the meeting, then immediately starts dying in delivery.

This is one reason AI strategy so often sounds smooth at the leadership layer and fragmented at delivery. The vocabulary is doing the wrong job.

The vocabulary problem gets worse, not better, in the most-discussed corner of the field: agentic AI.

Agentic AI adds theatre to an already vague field

Agent language makes the situation worse, because it dresses up a fairly mechanical process – reading files, routing instructions, running scripts, calling tools – in human-like clothes.

That framing deepens the black-box theatre instead of explaining what the system is actually doing.

Sidenote: from a marketing perspective, this is nothing short of genius, because it amplifies the sense of improbable alchemy around AI behaviour rather than revealing the more prosaic machinery underneath.

The ‘AI colleague’ or ‘AI team’ framing leads people to imagine a capability without being able to see how it is built: where its memory comes from, what it has access to, who decides when it stops, and what rules it follows.

None of these become clearer once the system has been personified to ‘act as your COO or executive assistant’.

Which is partly the point. The language is not trying to explain the magic. It is inviting everyone to get lost in it.

But strip the theatre back and the important questions are the same kind you would ask of any standard operating process.

What can the system see?

What is it allowed to do?

What rules govern it?

What tools does it have access to?

What processes does it follow?

Where is it supposed to escalate?

Who notices when it goes wrong?

Those are not magical questions. They are pretty boring ones that have more to do with knowledge management and operational workflow definition than AI tool engineering. They also happen to be the questions that decide whether agentic work is safe enough to deploy at all.

The second split most AI conversations skip

AI becomes much easier to understand the moment it is described by the work it is doing in the business, rather than by the most impressive technical component sitting inside it.

But the four types of AI bet described earlier are not enough on their own.

An internal tool for Marketing is not the same strategic object as an internal tool for Product. Workflow automation in Customer Experience is not the same risk as workflow automation in Internal Knowledge Management. A feature layer can be harmless in one context and dangerously misleading in another, because the visible AI surface is only the final expression of the work underneath.

The business context changes the knowledge the system depends on, the risk it creates, who has to maintain it, and what would make the output reliable enough to use.

This is the layer of specificity most AI conversations skip.

An organisation does not adopt AI once. It considers many possible AI bets across different areas of work. The same umbrella label can cover cross-department knowledge retrieval, customer support, research synthesis, product documentation maintenance, and sales response support.

These are not five versions of the same implementation question. They are five completely different operating questions.

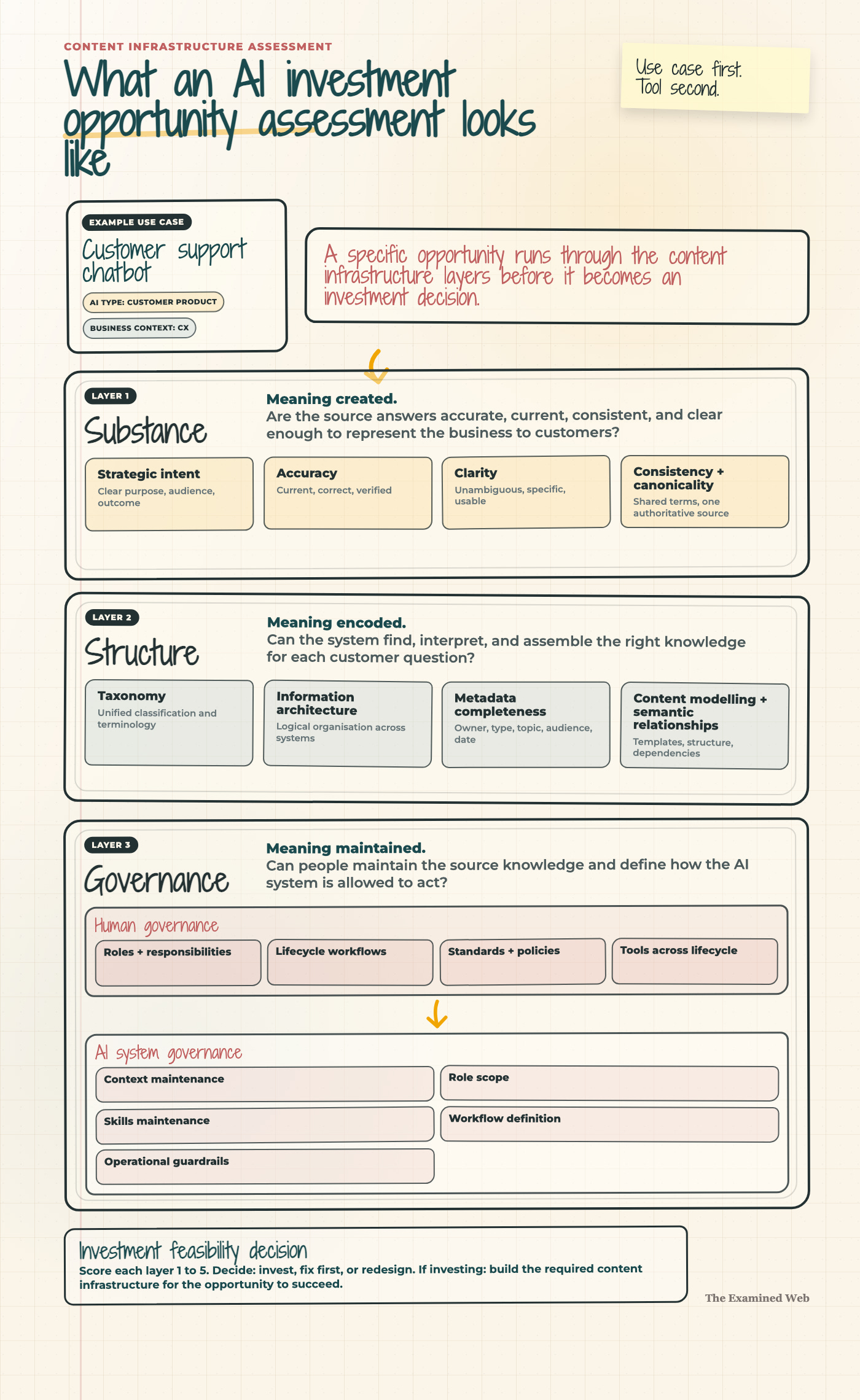

This is where the three-layer content infrastructure model becomes useful.

Substance is what the content says.

Structure is how that knowledge is organised.

Governance is how it is maintained.

Applied to typical business department contexts…

Internal Knowledge Management

Typical example: compliance and regulatory validation.

This is workflow automation, not retrieval – the system runs against communications, documents, or decisions automatically, flagging what falls outside policy. Governance carries the pressure: who owns the rule set, what gets escalated rather than actioned, and who keeps the validation logic current when regulations change. Substance decides whether the rules being enforced reflect what the regulator actually requires today, rather than what someone believed last year. Structure decides whether the right policy clauses, precedents, and evidence connect at speed. Without all three, the system either nods through breaches or floods the team with false positives – both expensive failures.

Customer Experience

Typical example: AI customer support agent.

This is an external-facing product – the customer is in a service relationship with the system, not just being served by it. Substance is acute because the system is answering customers in real time. If product details, policies, or troubleshooting steps in the source material are inconsistent or out of date, the AI scales those errors directly into customer conversations. Structure decides whether the right answer can be retrieved at the right moment of need. Governance decides what the system is allowed to commit to, when it escalates, and who maintains the source material that everything else depends on. The risk is that the organisation has exposed unresolved content problems directly to customers.

Marketing

Typical example: content repurposing and adaptation.

This is usually an internal tool. The visible appeal is straightforward – turn one piece of content into many, across audiences and channels. But Substance carries most of the pressure here. The system can only adapt what is there. If the source article is thin, the LinkedIn version is thinner. If brand voice is inconsistent across what already exists, the system amplifies the inconsistency at speed. Structure decides whether the system can tell approved sources from convenient drafts, and whether assets, claims, and audience mappings can be retrieved cleanly when needed. Governance decides whether the marketer using the tool is pulling from the approved library or from whatever happens to be lying in their inbox.

Product

Typical example: in-product contextual guidance.

This is a feature layer – AI sitting inside an existing product, surfacing help, prompts, or next-step suggestions tuned to where the user is in their journey. Structure carries the pressure: the system needs to know which features depend on which prerequisites, which problems map to which solutions, and how the user’s current state connects to the rest of the product surface. Substance decides whether the guidance is right when retrieved – out-of-date or contradictory help is worse than none. Governance decides who maintains the guidance as the product changes, and who notices when patterns reveal it has stopped working. Get this wrong and the AI feature becomes a friction multiplier for the users it was meant to help.

Sales Enablement

Typical example: RFP intelligence and approved response support.

This is an internal tool with unusually high commercial stakes. Substance and Governance carry the pressure together: the system needs approved claims, current case studies, accurate product language, and clear boundaries around what sales teams can promise. Structure determines whether the right proof point or response language can be found at the point of need. The failure mode is not usually dramatic. It is a proposal assembled from almost-right fragments, each reasonable in isolation, collectively nudging the organisation into a promise it cannot quite stand behind.

The four types tell you what kind of AI conversation you are in.

The business context tells you what the system depends on.

The infrastructure assessment tells you whether that dependency is strong enough to carry investment, and what would need to be built for the opportunity to succeed.

The point is not to create a more elaborate taxonomy for its own sake. It is to stop AI investment being discussed at a level where the words still sound coherent but the work underneath has already disappeared.

Once the work is visible, the question changes. Not “which AI tool should we adopt?” but “what kind of AI bet is this, where does it sit in the business, and what would have to be true underneath for it to work reliably?”

The gap knowledge workers are sensing

Knowledge workers who feel that more advanced AI remains elusive are not behind. They are responding sensibly to an explanation environment that has skipped a layer.

Non-coders do not need to master the builder’s vocabulary before they are allowed into the conversation. Their pre-existing judgement sits close to the heart of the matter: understanding what the system is trying to do, the underlying knowledge it depends on, and where the corresponding content needs reworking to make that knowledge reliable enough for the system to use.

People cannot responsibly sponsor, evaluate, or govern what they cannot tell apart. If ‘AI’ means four things to four departments, the organisation is not making four decisions. It is making one decision and trusting the differences will resolve themselves later. They won’t.

Make the work visible before making it advanced

The next stage of AI literacy won’t come from more prompting tips, more frontier announcements, or more architecture diagrams aimed at builders. It will come from surfacing a richer variety of AI opportunities through the pre-existing language of the knowledge work itself.

Example

In relation to [specific departmental function]…

Intent: what valuable functions could the system actually perform for us?

Operational knowledge: what knowledge will those functions depend on?

AI scope: what precise actions will the system be allowed to take?

Human scope: what will still need human-in-the-loop judgement?

Content infrastructure dependency: what would have to be true about the underlying content for this to work reliably?

Answering each of these questions rigorously leads to a well-rounded spec for agentic capability within that business unit. But note: not one of the questions above concerns technical development, tooling, or feature build.

They are operating questions attached to content dependencies. Any reasonably experienced knowledge worker can answer them within their field of specialism, just as they would for any other operating process. There is no good reason AI should be the exception.

Once those questions are asked routinely, AI stops being weather. It becomes a set of distinguishable bets, each with its own demands, each open to assessment.

The chatbot remains the easy part. Everything beyond it becomes far easier to question, plan, and govern once it is described from the layer the work actually lives on.

Thanks for reading.

If this way of thinking about AI feels closer to the real work, the Knowledge AI Opportunity Map takes the next step. It maps thirty use cases across five business contexts, so the conversation moves from generic ambition to the actual opportunities, dependencies, and constraints in front of you.

Or if you’d like to discuss what practical AI investment looks like – calibrated against real organisational constraints rather than vendor theatre or hype – you can find me at examinedweb.com.