Architecture is not infrastructure

Content infrastructure has three layers. AI strategy is mostly investing in one.

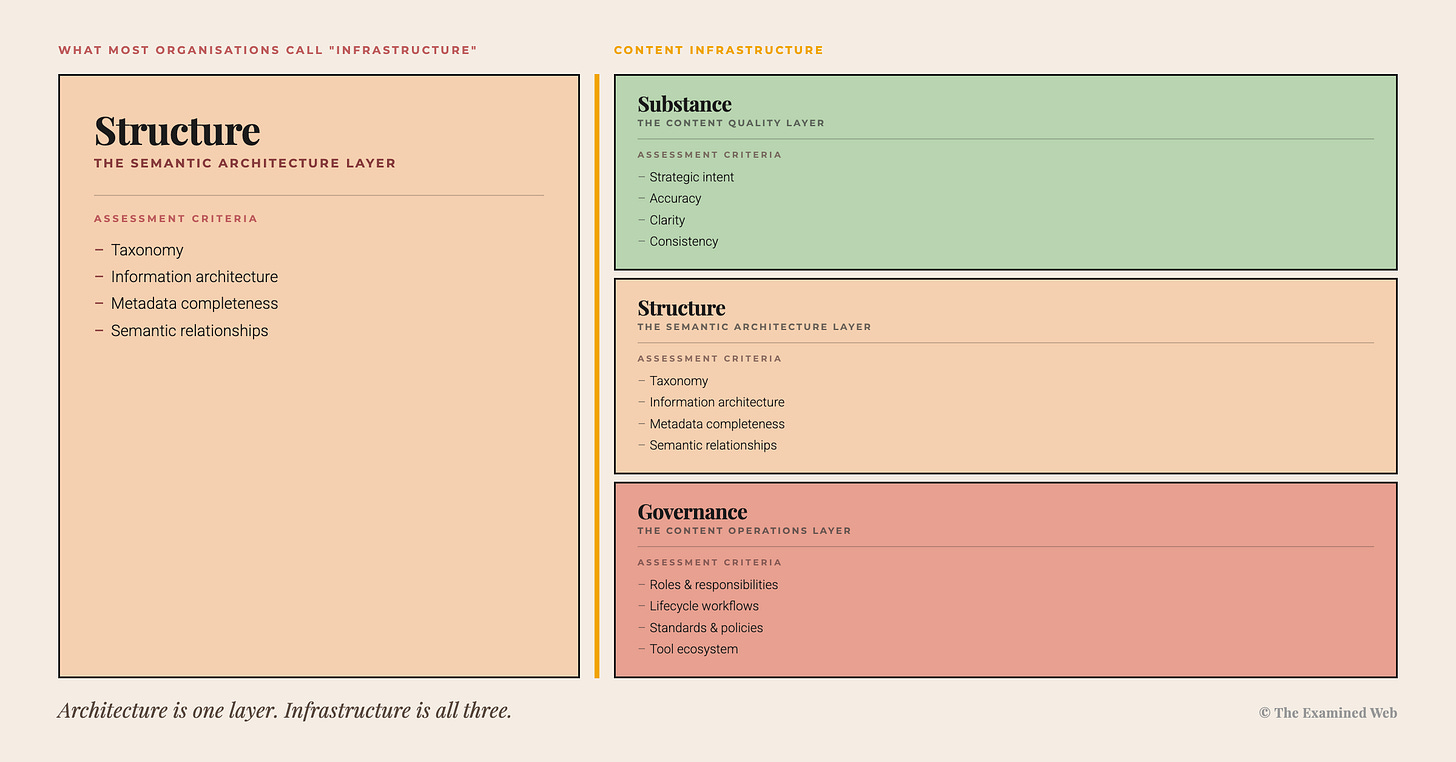

“Content infrastructure” – for AI, or otherwise – is increasingly used as a synonym for content architecture work.

But it isn’t the same thing.

To be clear: semantic layer engineering, linked metadata, structured context, taxonomy design, ontologies. These are essential capabilities carried out by brilliant people.

They are also only one layer of the multi-faceted content ecosystem that I refer to as content infrastructure.

Content architecture or content engineering is an integral part of content infrastructure. It is not the whole of it.

Collapsing the two leads to an expensive category error. And right now, the collapse is what’s happening in most AI discourse.

Why architecture rose to the surface

AI strategy is currently shaped primarily by engineering disciplines. Not a criticism – an observation of a pattern that played out in the web era, too.

Engineering defines the technology. So engineering defines the vocabulary. And for a period, before adjacent disciplines catch up and assume authority over specific use cases, engineering effectively gatekeeps every aspect of how the technology gets applied.

The web went through this transition visibly.

1995: The internet is here. A team of developers will help us figure out how to build a website. They’ll manage it. They’ll own every aspect of it, in fact.

2005: A dedicated website team with no coding capability manages the website using a CMS. Obviously.

AI is at 1995.

When engineers evaluate what “content infrastructure” means, they pattern-match to what their discipline recognises. Semantic layers. Structured context. Taxonomy modelling. Linked data. Explicit knowledge representation. These have direct analogues in how engineers already think about systems. So they land immediately. “Ah right – LLMs require a semantic layer of engineering. Makes sense.”

“Editorially review, cut down, curate and revise your bloated, inconsistent, low-value knowledge base content” has no engineering analogue. Whatsoever.

It sounds like someone else’s job. It sounds like a much ‘softer’ job. A job without scientific rigour, at that.

In a system where funding, vocabulary, and decision rights sit inside engineering functions, architecture will always rise first. Not because it’s wrong – but because it’s most legible to the functions currently defining the vocabulary.

The result is a systematic distortion of what content infrastructure actually requires, driven not by evidence but by disciplinary resonance. Architecture rose to the surface because it speaks engineer. The other layers sank because they don’t.

This is Engineering Bias producing a measurable market outcome.

Rail infrastructure isn’t just tracks

Let’s (casually, as we all do every now and again when we’re in a philosophical mood, right?) think about what rail infrastructure actually is.

Tracks. Overhead cables. Stations. Vehicles. Maintenance schedules. Staff. Ticketing systems. Safety protocols. Operational governance.

Remove any one of those components and the system fails. No cables means no power. No maintenance schedules means vehicles break down. No operational governance means the whole thing runs erratically regardless of how well-laid the tracks are.

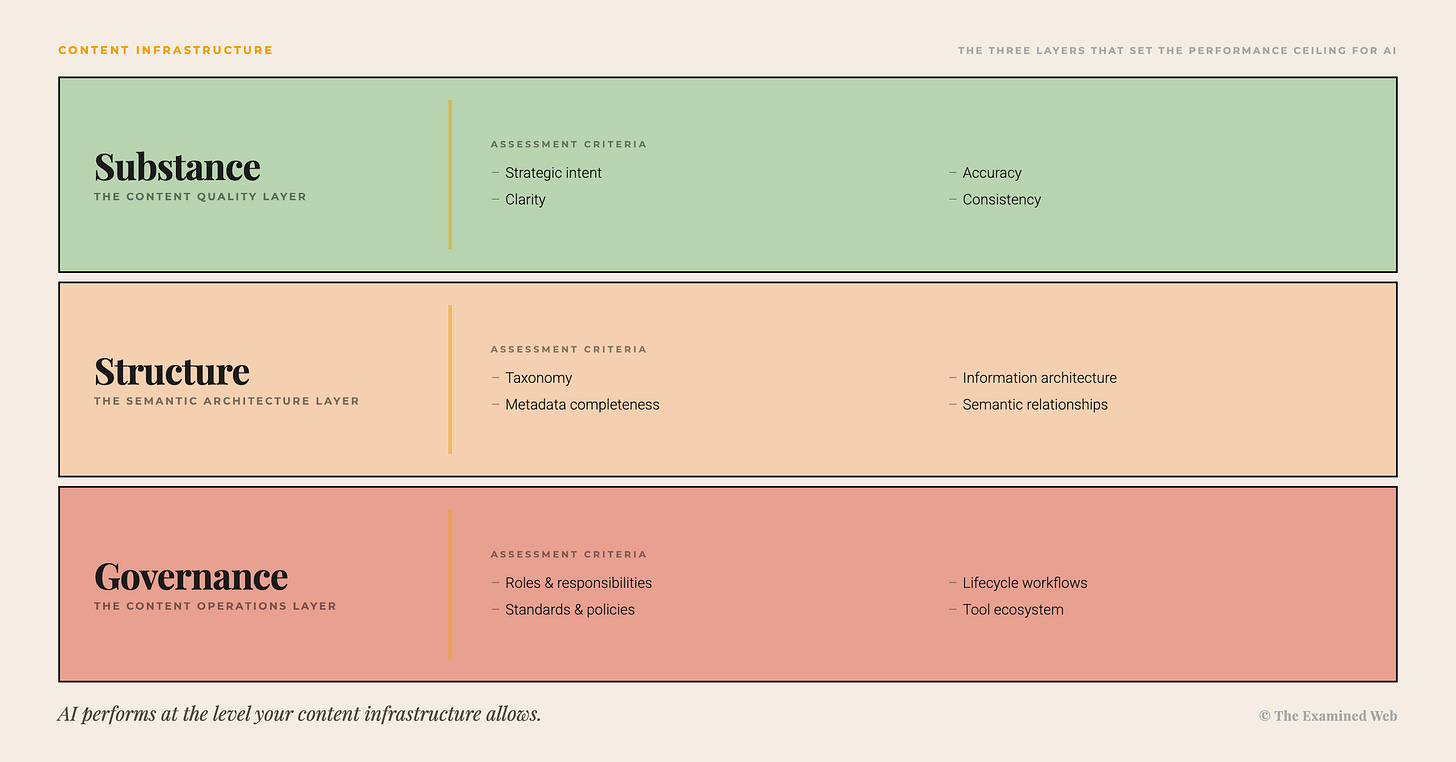

Content infrastructure works the same way. Three layers. Each dependent on the others.

Substance is the editorial layer – whether content is accurate, clear, consistent, relevant, and purposeful. Does the same product get described the same way across Marketing, Product, and Support – or does it have four names, three sets of specs, and two contradictory pricing structures? Can a system trust what it’s being fed?
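To make the substance question concrete, here is a minimal sketch of the kind of check it implies: does one product carry more than one name or price across departments? The records, the field names, and the `find_conflicts` helper are all hypothetical, invented purely for illustration.

```python
from collections import defaultdict

# Hypothetical records: one description of the same product per department.
descriptions = [
    {"department": "Marketing", "product_id": "P-100", "name": "Acme Cloud", "monthly_price": 49},
    {"department": "Product", "product_id": "P-100", "name": "AcmeCloud Platform", "monthly_price": 49},
    {"department": "Support", "product_id": "P-100", "name": "Acme Cloud", "monthly_price": 59},
]


def find_conflicts(records, fields=("name", "monthly_price")):
    """Return, per product, the fields whose values differ across records."""
    values = defaultdict(lambda: defaultdict(set))
    for record in records:
        for field in fields:
            values[record["product_id"]][field].add(record[field])

    conflicts = {}
    for product_id, by_field in values.items():
        # A field is in conflict when more than one distinct value was recorded.
        differing = {f: sorted(v, key=str) for f, v in by_field.items() if len(v) > 1}
        if differing:
            conflicts[product_id] = differing
    return conflicts


conflicts = find_conflicts(descriptions)
print(conflicts)
```

A check like this can only flag the disagreement. Deciding which name and which price is canonical remains an editorial call.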

Structure is the architectural layer – taxonomy, metadata, content models, information architecture. Whether content can be found, filtered, and connected. This is what content engineers build. It’s essential work.

Governance is the operational layer – who owns what, how content gets maintained, what happens when something changes. Without it, whatever you build in the Structure layer degrades the moment organisational reality asserts itself.

These layers are interdependent, rather than strictly hierarchical or sequential. They constrain and enable each other. Governance gaps degrade structure over time; structure gaps make substance harder to maintain at scale; substance failures mean the architecture is organising the wrong things.

Neglect any one layer while investing heavily in another and you’re not truly building infrastructure.

You cannot semantically engineer meaning out of nonsense

For most organisations, the root cause preventing their content from being fit for knowledge AI isn’t that it’s not semantically mapped.

It’s that the content (by machine standards if we’re being polite; but in all reality, by most human standards if we’re being truthful) is bad.

Not structurally lacking. Editorially lacking. The basics: unclear, bloated, circular, irrelevant, dense, impenetrable information. Half of which was the product of either internal politics or corporate vanity (or both), rather than the result of what anyone actually needed to know about, care about, or do anything with.

And if that’s the experience of a human reader on the average corporate website, customer support knowledge base, employee intranet – what chance does an AI stand of making sense out of it?

Linked data is a graph of semantic relationships between entities. An entity is a node. A node contains meaning.

What happens to the graph when the nodes contain the type of editorial bloat and waffle described above?

When information is inconsistent, unclear, and duplicated across departments, and contains non-canonical messaging, conflicting definitions, term variances, and basic discrepancies in core product and service names…

Is that a semantic connection and contextual retrieval problem? Yes. But more fundamentally? It’s a core meaning problem that originates in the basic production of the substance, not in the structure that’s wrapped around it.

Even an elegantly constructed semantic graph doesn’t resolve that.

Structure work amplifies whatever’s in the substance layer. Which is enormously valuable when the substance is sound. But a liability when it isn’t.
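A toy illustration of that amplification, as a sketch rather than a real linked-data stack: the triples, the predicate names, and the assumption that each predicate should hold a single value are all mine, made up for the example. The graph links every statement to the right entity, and in doing so it surfaces every contradiction too.

```python
# Hypothetical (subject, predicate, object) triples extracted from three departments' pages.
triples = [
    ("product:P-100", "hasName", "Acme Cloud"),
    ("product:P-100", "hasName", "AcmeCloud Platform"),
    ("product:P-100", "hasMonthlyPrice", "49"),
    ("product:P-100", "hasMonthlyPrice", "59"),
    ("product:P-100", "hasCategory", "Hosting"),
]


def describe(subject, graph):
    """Collect every object asserted for each predicate of a subject."""
    facts = {}
    for s, predicate, obj in graph:
        if s == subject:
            facts.setdefault(predicate, []).append(obj)
    return facts


def contested(facts):
    """Predicates with more than one distinct value (assumed to be single-valued)."""
    return sorted(p for p, objs in facts.items() if len(set(objs)) > 1)


facts = describe("product:P-100", triples)
print(facts)             # every assertion, contradictory or not
print(contested(facts))  # the predicates the graph cannot settle on its own
```

The graph does its job perfectly here. It just has no basis for choosing between two names or two prices.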

I’ve seen this in practice. Enterprise search tools deployed on content that contradicts itself across departments. The tool works. The results are reliably unreliable. Users stop trusting it within weeks. The implementation gets blamed. The real problem – that the actual substance of what’s being queried is itself inherently incoherent – goes unexamined.

Increasing signal means decreasing noise

There’s a related insight that follows directly from this.

One of the most powerful things most organisations can do to prepare their content for knowledge AI isn’t necessarily to semantically map it. It’s to cut a significant proportion of it.

Semantic architecture applied to content bloat doesn’t resolve the bloat – it encodes and indexes it. AI retrieves the redundant alongside the relevant, synthesises across contradictions it has no way to reconcile, and produces output that faithfully reflects the input. At speed.

Humans compensated for this for decades. The redundant departmental update that nobody read or used in the first place… and nobody retired. The five-year-old policy document that still ranks in search. The three contradictory product descriptions that exist because Marketing, Product, and Support each wrote their own takes, in silo.

Humans skimmed past all of it and extracted the signal anyway. In spite of the bloat. Inefficiently, but functionally.

AI can’t do that. Every orphaned asset, every politically-motivated page, every duplicate that survived a migration because deleting things ‘felt risky’ – all of it enters the corpus as signal.

It ingests everything with equal weight – no organisational memory, no basis to decide which of two contradictory descriptions is authoritative, no way to recognise an orphaned asset without a governance signal.
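Here is a rough sketch of what a governance signal changes at retrieval time. Everything in it is an assumption for illustration: the `status`, `owner`, and `last_reviewed` fields, the two-year review window, and the simple keyword match standing in for real retrieval.

```python
from datetime import date

# Hypothetical corpus; `status`, `owner`, and `last_reviewed` are assumed governance fields.
corpus = [
    {"id": "policy-2019", "text": "Refunds within 14 days.", "status": "published",
     "owner": None, "last_reviewed": date(2019, 3, 1)},
    {"id": "policy-2024", "text": "Refunds within 30 days.", "status": "published",
     "owner": "Support", "last_reviewed": date(2024, 6, 1)},
    {"id": "promo-old", "text": "Refunds within 60 days (promo).", "status": "retired",
     "owner": "Marketing", "last_reviewed": date(2021, 1, 15)},
]


def naive_retrieve(query, docs):
    """Keyword match only: every matching document counts equally."""
    return [d["id"] for d in docs if query.lower() in d["text"].lower()]


def governed_retrieve(query, docs, today, max_age_days=730):
    """Same match, restricted to published, owned, recently reviewed documents."""
    return [
        d["id"]
        for d in docs
        if query.lower() in d["text"].lower()
        and d["status"] == "published"
        and d["owner"] is not None
        and (today - d["last_reviewed"]).days <= max_age_days
    ]


today = date(2025, 1, 1)  # fixed date so the example is reproducible
naive = naive_retrieve("refunds", corpus)
governed = governed_retrieve("refunds", corpus, today)
print(naive)
print(governed)
```

Without those fields, three contradictory refund policies arrive as three equally valid answers. With them, one survives. The filter itself is trivial; the hard part is someone owning the content and keeping those fields true.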

It will then produce output that looks like ‘bad AI’. It isn’t. It’s accurate AI, reporting faithfully on bad content. The AI says: you are bloat. Therefore, I will become bloat.

Increasing signal sometimes means decreasing noise. Not adding structure to it. That’s an editorial decision. It requires editorial judgement. No taxonomy schema or graph substitutes for someone deciding what the organisation actually needs to communicate – and retiring the rest.

Editorial curation is itself a form of context engineering. Maybe the most powerful form of context engineering available. But nobody’s really talking about it. Why? Because it doesn’t sound engineering enough.

The case for completeness

None of this is an argument against content engineering. The argument is for completeness.

Taxonomy design, IA, metadata systems, linked data – structure layer expertise is essential, and it requires specialist skill that most content strategists don’t have. The organisations doing this work well are ahead of most of the field.

An organisation that has invested seriously in semantic architecture – taxonomy design, linked metadata, content models – but left substance and governance untouched hasn’t completed its content infrastructure work. It has completed one layer of it.

The architecture will surface contradictions faster. It will make the substance problems more findable, not less. And without governance in place, the architectural work degrades the moment the organisation starts changing – which it always does.

Each layer makes the others work. Structure-layer engineering succeeds or fails based on what’s in the substance layer – and degrades over time without the governance layer maintaining what’s been built. That’s not a criticism of any particular layer. It’s a systems observation: each layer is important, and the layers are interdependent, if the whole is to succeed.

Content infrastructure is a complete system. The discourse is describing one layer of it as if it were the whole – and organisations are making investment decisions on that basis.

The architecture will tell them what’s missing. It just won’t tell them in time.